Artificial Intelligence is rapidly becoming the brain of governments, corporations, and critical infrastructure. But as AI adoption accelerates, so does its exposure to attack.

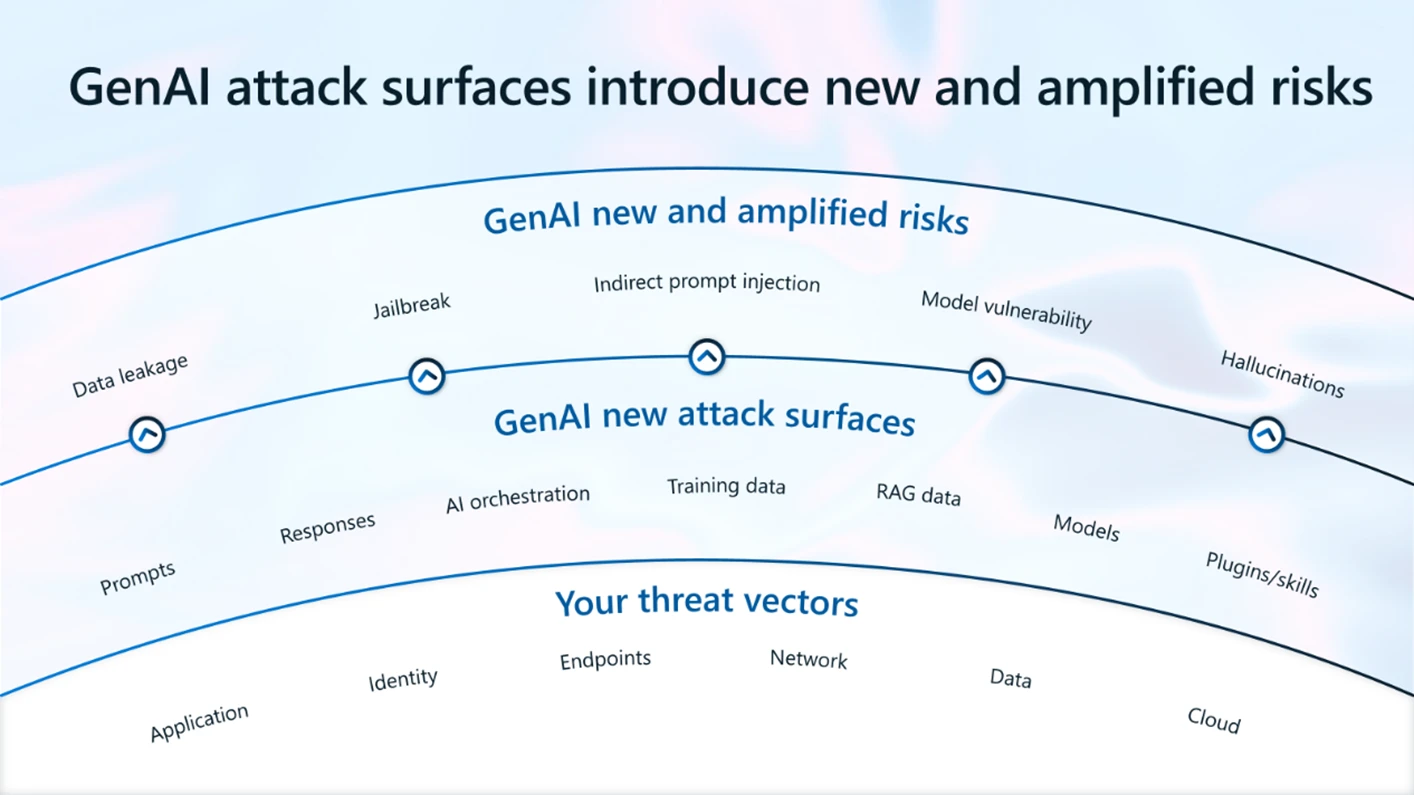

Unlike traditional software, AI systems don’t just execute code, they interpret language, learn from data, and make probabilistic decisions. This creates entirely new attack surfaces that security teams were never trained to defend.

This article maps the five core AI attack surfaces shaping the next era of cyber conflict:

- Prompt Injection & Input Manipulation

- Adversarial Attacks & Model Evasion

- Data Poisoning & Model Leakage

- Exploitable Bias & Blind Spots

- Black Box Failures & Undetectable Breaches

Together, these form the foundation of AI-native cyberwarfare.

1. Prompt Injection & Input Manipulation

AI systems rely on user input as their primary control surface. This makes them uniquely vulnerable to linguistic attacks rather than technical exploits.

Prompt injection occurs when an attacker crafts inputs that override system instructions, bypass safeguards, or manipulate model behavior.

Examples:

- Forcing chatbots to reveal confidential data

- Tricking AI into generating malicious code

- Circumventing content filters

- Extracting system prompts or internal logic

Unlike SQL injection, prompt injection exploits meaning, context, and persuasion, not syntax.

This turns language itself into an attack vector.

Why it’s dangerous:

- No authentication required

- Hard to detect

- Scales instantly

- Works across platforms

AI systems that interact with emails, documents, customer chats, or APIs become exposed gateways into enterprise workflows.

2. Adversarial Attacks & Model Evasion

Adversarial attacks manipulate inputs so that AI systems misclassify or misunderstand reality.

A tiny, almost invisible change to data can cause catastrophic errors.

Examples:

- Slight pixel changes causing image recognition to fail

- Altered audio commands controlling voice systems

- Modified malware that bypasses AI detection

- Fake faces defeating biometric systems

These attacks don’t break the model, they weaponize its math.

AI security tools trained to detect threats can themselves be evaded using AI-generated adversarial samples, creating a recursive arms race.

Strategic impact:

- Military drones misidentify targets

- Surveillance systems fail

- Fraud detection collapses

- Medical diagnostics become unreliable

This is not hacking code.

It is hacking perception.

3. Data Poisoning & Model Leakage

AI learns from data. Whoever controls the data controls the model.

Data poisoning injects malicious samples into training or fine-tuning datasets to subtly alter behavior.

Examples:

- Teaching models hidden backdoors

- Biasing decision systems

- Inserting trigger phrases that activate harmful outputs

- Corrupting open-source datasets

Model leakage occurs when attackers extract:

- Training data

- Private information

- Model weights

- Proprietary logic

Through repeated queries, attackers can reverse-engineer what the AI has learned.

Why this matters:

- Intellectual property theft

- Privacy violations

- Persistent backdoors

- Long-term compromise

A poisoned model can remain compromised for years without detection.

4. Exploitable Bias & Blind Spots

AI systems reflect their training data, including its gaps and distortions.

Attackers exploit:

- Cultural bias

- Language gaps

- Rare edge cases

- Underrepresented scenarios

These blind spots allow manipulation and evasion.

Examples:

- Fraud patterns unseen in training data

- Political or ideological manipulation

- Misclassification of foreign languages

- Social engineering amplified by AI trust

Bias is no longer just an ethics issue.

It is a security vulnerability.

Adversaries weaponize what the model does not understand.

5. Black Box Failures & Undetectable Breaches

Modern AI systems are opaque. Even their creators cannot fully explain how decisions are made.

This creates the most dangerous attack surface: invisible failure.

When an AI system is compromised:

- It may continue to function normally

- It may hallucinate subtly

- It may leak data gradually

- It may obey hidden triggers

No alarms go off.

These breaches are:

- Hard to audit

- Hard to prove

- Hard to attribute

- Hard to recover from

A black-box system can be weaponized without ever appearing “hacked.”

Why AI Attack Surfaces Matter Geopolitically

AI attack surfaces are now:

- Accessible

- Cheap

- Scalable

- Borderless

This allows:

- State-backed actors

- Hacktivists

- Criminal syndicates

- Lone operators

to wage influence, espionage, and sabotage without bombs or missiles.

Cyberwarfare is evolving into cognitive warfare:

- Manipulating models

- Corrupting decision engines

- Shaping automated judgments

The battlefield is no longer networks alone.

The Strategic Shift: From Software Security to Intelligence Security

Traditional cybersecurity defends:

- Code

- Networks

- Devices

AI security must defend:

- Meaning

- Training data

- Reasoning

- Outputs

- Trust

The new perimeter is not firewalls.

Organizations that deploy AI without securing these attack surfaces risk:

- Data breaches

- System manipulation

- Regulatory collapse

- National security exposure

Outro: The New War Is Against Thinking Machines

AI attack surfaces redefine what it means to be vulnerable.

Attacks no longer target machines alone, they target:

- Perception

- Judgment

- Decisions

- Reality

Every AI system deployed without adversarial thinking becomes a potential weapon in someone else’s hands.

The future of security is not just about preventing intrusions.

And intelligence has never been so exposed.